From Doing Agile to Being Agile

Based on McKinsey Rewired Chapter 13: "From doing agile to being agile" — E. Lamarre, K. Smaje, R. Zemmel (Wiley, 2023)

ADLC automates agile ceremonies with 26 AI agents operating under constitutional governance. Layer 1 (bash scripts) collects data in 5 seconds. Layer 2 (Claude agents) synthesizes recommendations autonomously. The result: decisions in minutes, not days.

McKinsey identifies the gap between "doing agile" and "being agile": most enterprises adopt the ceremonies and call that transformation. In ADLC, being agile means agents exercise autonomous judgment within constitutional guardrails, reprioritizing work from evidence rather than only reporting status. The ADLC framework closes that gap by making AI agents first-class ceremony participants, not just automation scripts.

Why Being Agile Matters

The bottleneck in the digital, cloud, data and AI age is not automation — it is decision quality at speed. Three markers distinguish "being agile" from "doing agile" (McKinsey Rewired, Chapter 13):

- Autonomous decisions within guardrails — ADLC agents (26 specialists) operate under constitutional governance with 35 enforcement hooks, not manual approval for every action.

- Evidence-based reprioritization — every ceremony reads real data (CSV, SQLite, JIRA, git log) and produces scored recommendations, compressing the OODA loop from days to minutes.

- Embedded learning loops — sprint retrospectives write git-tracked evidence that future ceremonies consume, so the system improves across sprints without human memory.

ADLC ceremonies are not process theater. They are the operating system that turns a single manager and 26 AI agents into a product team that outcompetes in the age of digital and AI.

Sprint Model

Product: xOps (entire digital platform).

Sub-products: CloudOps, FinOps, DevOps, ADLC — components within xOps, not separate product lines.

Sprint: 1-week time-box. ONE active sprint for the entire product (xOps-S{N}).

Rationale: the AI-augmented pod (1 HITL + 26 agents) delivers at 3-5x velocity. The Scrum Guide 2020 limits sprints to "one month or less"; a 1-week sprint sits well inside that limit and gives a rapid PDCA cadence.

Boards: SPM (product development, Launch Pod) + OPS (ITSM operations). Sub-products are JIRA Components (CO, FO, TF, AD, XC), not boards.
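The naming and time-box rules above can be sketched in a few lines. This is a minimal illustration, not ADLC code: the helper names and the idea of deriving dates from a known first-sprint start date are assumptions; only the `xOps-S{N}` pattern and the 1-week length come from the text.

```python
from datetime import date, timedelta

PRODUCT = "xOps"                    # single product, per the sprint model
SPRINT_LENGTH = timedelta(weeks=1)  # 1-week time-box


def sprint_id(n: int) -> str:
    """Format the ID of sprint n, following the xOps-S{N} pattern."""
    if n < 1:
        raise ValueError("sprint numbers start at 1")
    return f"{PRODUCT}-S{n}"


def sprint_window(n: int, first_sprint_start: date) -> tuple[date, date]:
    """Return (first day, last day) of sprint n.

    first_sprint_start is a hypothetical input: the source does not say
    when sprint 1 began.
    """
    start = first_sprint_start + (n - 1) * SPRINT_LENGTH
    return start, start + SPRINT_LENGTH - timedelta(days=1)
```

Because there is only one active sprint for the whole product, a single integer is enough to identify both the sprint and its date range.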

Agile Cadence

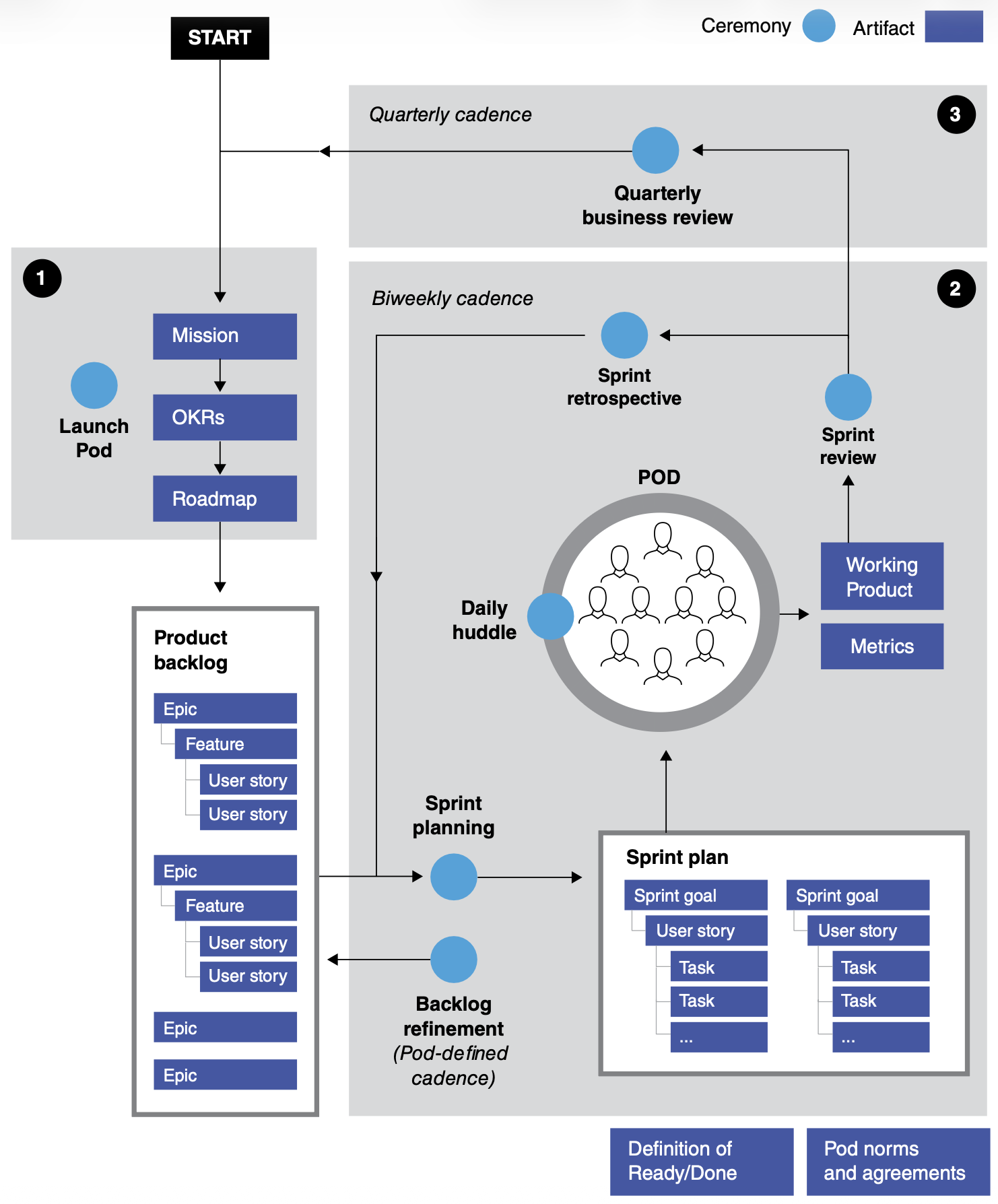

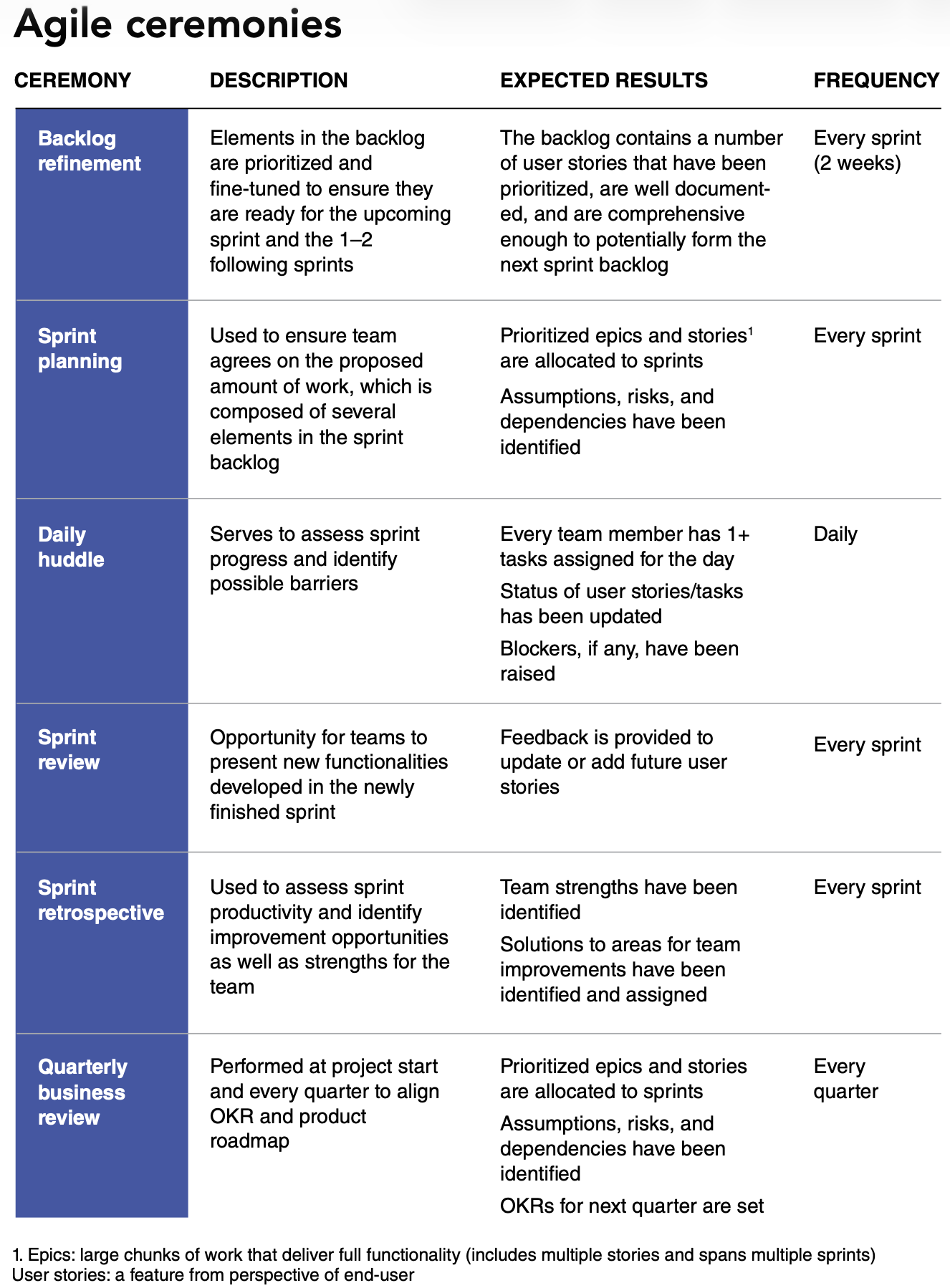

The following cadence maps McKinsey Exhibit 13.2 to ADLC ceremony automation.

Ceremony-to-Component Mapping

Every McKinsey ceremony has a corresponding ADLC automation layer:

CxO View

| What | Why It Matters | Cadence |
|---|---|---|
| Daily Standup | Team health + blocker visibility | Daily |
| Sprint Review | Working product demo + stakeholder feedback | Weekly |
| QBR | Velocity trends + OKR progress + strategic direction | Quarterly |

Engineering View

| Ceremony | Agent | Taskfile | Claude Command |
|---|---|---|---|
| Daily Standup | observability-engineer | `task ceremony:standup` | `/ceremony:standup` |
| Sprint Planning | product-owner | `task ceremony:plan` | `/ceremony:plan` |
| Sprint Review | observability-engineer | `task ceremony:review` | `/ceremony:review` |
| Sprint Retro | observability-engineer | `task ceremony:retro` | `/ceremony:retro` |
| QBR | observability-engineer | `task ceremony:qbr` | `/ceremony:qbr` |
| Backlog Refinement | product-owner | (ADLC-S3) | (ADLC-S3) |

Operations View

| Ceremony | Key Data Sources | Evidence Path |
|---|---|---|
| Daily Standup | stories.csv, dora.csv, git log | `tmp/*/ceremonies/standup-*.json` |
| Sprint Review | DORA actuals, demo items | `framework/ceremonies/sprint-review-*/` |
| Sprint Retro | 4L review, agent consensus | `framework/retrospectives/` |
| QBR | Quarterly velocity, OKR, cost | `framework/ceremonies/qbr-*.md` |
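The mapping tables above can also be read as a small lookup structure. The sketch below is one way to hold them in code; the `Ceremony` dataclass, the dictionary keys, and `agent_for` are illustrative names, not part of ADLC. Cells that the tables leave empty or mark as (ADLC-S3) are stored as `None` rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Ceremony:
    name: str
    agent: str
    task: Optional[str]                  # Taskfile invocation, None if not listed
    command: Optional[str]               # Claude command, None if not listed
    evidence_path: Optional[str] = None  # None where the tables give no path


# Values copied from the Engineering and Operations views.
CEREMONIES = {
    "standup": Ceremony("Daily Standup", "observability-engineer",
                        "task ceremony:standup", "/ceremony:standup",
                        "tmp/*/ceremonies/standup-*.json"),
    "plan": Ceremony("Sprint Planning", "product-owner",
                     "task ceremony:plan", "/ceremony:plan"),
    "review": Ceremony("Sprint Review", "observability-engineer",
                       "task ceremony:review", "/ceremony:review",
                       "framework/ceremonies/sprint-review-*/"),
    "retro": Ceremony("Sprint Retro", "observability-engineer",
                      "task ceremony:retro", "/ceremony:retro",
                      "framework/retrospectives/"),
    "qbr": Ceremony("QBR", "observability-engineer",
                    "task ceremony:qbr", "/ceremony:qbr",
                    "framework/ceremonies/qbr-*.md"),
    # The table shows (ADLC-S3) instead of a task or command for this row.
    "refinement": Ceremony("Backlog Refinement", "product-owner", None, None),
}


def agent_for(key: str) -> str:
    """Return the agent a ceremony delegates to."""
    return CEREMONIES[key].agent
```

For example, `agent_for("plan")` returns `"product-owner"`, matching the Engineering View.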

2-Layer Ceremony Model

The ADLC framework implements a 2-layer model. Layer 1 (scripts) is "doing agile" — deterministic data collection. Layer 2 (agent synthesis) is "being agile" — autonomous judgment that recommends, not just reports. Claude commands and skills are the primary operating mode; Taskfile provides the offline fallback:

Layer 1 -- Deterministic Collection (bash scripts, zero LLM):

- `collect-daily-standup.sh` reads CSV, SQLite, coordination logs

- `collect-sprint-review.sh` enumerates demo items, DORA actuals

- `collect-sprint-retro.sh` gathers story completion, agent consensus

- Output: raw JSON in `tmp/<project>/ceremonies/`
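To make the Layer 1 idea concrete, here is a rough Python stand-in for a deterministic collector. The real collectors are bash scripts whose contents are not shown here, so everything below is an assumption for illustration: the function name, the `status` column in stories.csv, the JSON field names, and the date-based file name. Only the CSV input and the `tmp/<project>/ceremonies/` output location come from the text.

```python
import csv
import json
from collections import Counter
from datetime import date
from pathlib import Path
from typing import Optional


def collect_standup(stories_csv: Path, project: str,
                    root: Path = Path("."),
                    today: Optional[date] = None) -> Path:
    """Read stories.csv and write a raw JSON snapshot. No LLM involved."""
    today = today or date.today()
    with stories_csv.open(newline="") as fh:
        rows = list(csv.DictReader(fh))

    # "status" is an assumed column name, not taken from the source.
    by_status = Counter(row["status"] for row in rows)

    payload = {
        "ceremony": "standup",
        "date": today.isoformat(),
        "story_count": len(rows),
        "stories_by_status": dict(by_status),
    }

    out_dir = root / "tmp" / project / "ceremonies"
    out_dir.mkdir(parents=True, exist_ok=True)
    out_path = out_dir / f"standup-{today.isoformat()}.json"
    # sort_keys keeps the file byte-identical for identical input.
    out_path.write_text(json.dumps(payload, indent=2, sort_keys=True) + "\n")
    return out_path
```

The point of the design is that the same input always produces the same file, so any judgment applied later in Layer 2 can be traced back to reproducible data.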

Layer 2 -- Agent Synthesis (observability-engineer):

- Renders terminal template from raw JSON

- Generates 4L retrospective from sprint patterns

- Writes git-tracked evidence to `framework/ceremonies/`

- Produces HITL email deliverable (`email.md`)
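The synthesis itself is performed by the agent and cannot be reduced to a script, but the deterministic steps around it (rendering from raw JSON, then writing a machine-readable file and a human-readable one) can be sketched. The function names, the raw JSON keys (`date`, `stories_by_status`), and the `evidence.json` file name are hypothetical; `email.md` is the deliverable named above.

```python
import json
from pathlib import Path


def render_standup(raw: dict) -> str:
    """Render a plain-text summary from raw collector JSON (assumed keys)."""
    lines = [f"Daily Standup {raw['date']}", ""]
    for status, count in sorted(raw["stories_by_status"].items()):
        lines.append(f"- {status}: {count}")
    return "\n".join(lines) + "\n"


def write_evidence(raw: dict, summary_md: str, out_dir: Path) -> tuple[Path, Path]:
    """Write the JSON record and the HITL-facing Markdown side by side."""
    out_dir.mkdir(parents=True, exist_ok=True)
    json_path = out_dir / "evidence.json"  # hypothetical file name
    md_path = out_dir / "email.md"
    json_path.write_text(json.dumps(raw, indent=2, sort_keys=True) + "\n")
    md_path.write_text(summary_md)
    return json_path, md_path
```

In a real run, the agent's recommendations would be added to the summary before it is written; this sketch only covers the mechanical part.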

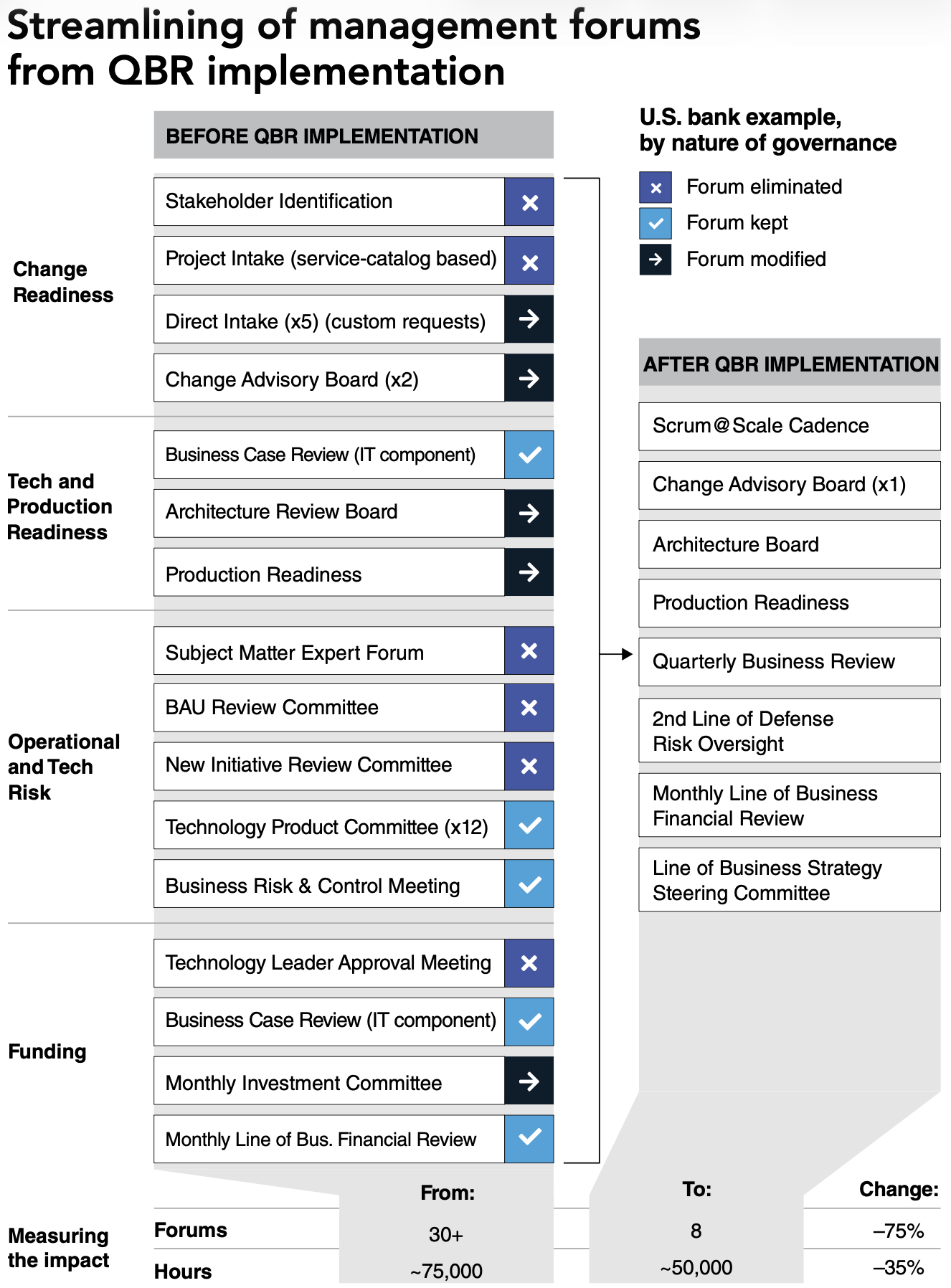

Quarterly Business Review (QBR)

The QBR aggregates sprint-level data into quarterly trends for leadership.

QBR inputs:

- DORA metrics trend (sprint-over-sprint from `dora.csv`)

- Story velocity (completed stories per sprint from `stories.csv`)

- OKR key result progress (from team mission JSON)

- Cost variance (from FinOps evidence)
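As an illustration of the velocity input, the sketch below counts completed stories per sprint and summarizes the trend. It assumes each row of stories.csv has `sprint` and `status` columns and that a finished story has status `done`; none of these names are confirmed by the source. Sprint IDs are assumed to follow the `xOps-S{N}` pattern.

```python
from collections import Counter
from typing import Iterable, Mapping


def sprint_number(sprint_id: str) -> int:
    """Extract N from an ID like xOps-S12 so sprints sort numerically."""
    return int(sprint_id.rsplit("-S", 1)[1])


def quarterly_velocity(rows: Iterable[Mapping[str, str]],
                       done_status: str = "done") -> dict:
    """Aggregate completed stories per sprint into a simple trend summary.

    Sprints with no completed stories do not appear in the result.
    """
    counts = Counter(r["sprint"] for r in rows if r["status"] == done_status)
    ordered = sorted(counts, key=sprint_number)
    series = [(s, counts[s]) for s in ordered]
    values = [v for _, v in series]
    return {
        "per_sprint": series,
        "average": sum(values) / len(values) if values else 0.0,
        "change_first_to_last": values[-1] - values[0] if values else 0,
    }
```

Rows can come straight from `csv.DictReader`. Sorting by the numeric suffix matters because a plain string sort would place `xOps-S10` before `xOps-S2`.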

ADLC Component Architecture

The full stack powering each ceremony:

| Component Type | Count | Examples |
|---|---|---|
| Agents | 2 | observability-engineer (execution), product-owner (planning) |
| Commands | 6 | `/ceremony:standup`, plan, review, retro, qbr, `/metrics:update-dora` |
| Collector Scripts | 3 | `collect-daily-standup.sh`, `collect-sprint-review.sh`, `collect-sprint-retro.sh` |
| Skills | 2 | `session-retrospective-protocol.md`, `production-ready-scoring.md` |
| Hooks | 1 | `enforce-coordination.sh` (exempt for ceremonies) |
| Evidence Paths | 3 | `tmp/`, `framework/ceremonies/`, `framework/retrospectives/` |

Daily Workflow

Governance

All ceremonies are exempt from PO+CA coordination requirements (they read existing data -- no architecture decisions). However, ceremony commands must delegate to the designated agent per the Ceremony Delegation table.

| Rule | Enforcement |
|---|---|
| Delegation required | `agent:` frontmatter in command file |
| Coordination exempt | `coordination: exempt` in command file |
| Sequential scoring | Rules-layer (no parallel re-scoring) |
| Evidence dual-write | JSON (agent) + MD (HITL) required |
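The first two rules lend themselves to a mechanical check. The sketch below assumes command files open with a `---` delimited block of simple `key: value` lines; that layout, and both function names, are assumptions rather than documented ADLC behavior. It does not handle nested YAML.

```python
def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse flat key: value pairs between leading '---' delimiters."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}  # no closing delimiter: treat the frontmatter as missing


def check_ceremony_command(text: str, expected_agent: str) -> list[str]:
    """Return a list of rule violations; an empty list means compliant."""
    fm = parse_frontmatter(text)
    problems = []
    if fm.get("agent") != expected_agent:
        problems.append(
            f"agent must be {expected_agent!r}, found {fm.get('agent')!r}"
        )
    if fm.get("coordination") != "exempt":
        problems.append("coordination must be 'exempt'")
    return problems
```

A check like this could run in CI or a pre-commit step so that a ceremony command cannot silently drop its delegation or exemption marker.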

From Process to Competitive Advantage

The shift from doing agile to being agile is not incremental — it is a step change in how fast an organization learns. When ceremonies produce autonomous recommendations instead of status reports, the time from data to decision compresses from days to minutes.

Explore the full ADLC framework at adlc.oceansoft.io.

Run your first ceremony: task ceremony:standup (5 seconds, zero config).

References

- McKinsey Rewired, Chapter 13: "From doing agile to being agile"

- Daily Standup Guide

- DORA Targets

- FAANG Delivery

- ITSM Operating Model PR/FAQ — Amazon Working Backwards applied to ITSM modernization